OpenAI Expands Trusted Access Program For Cyber Defense AI Tools

The company will allow vetted users to access GPT-5.4-Cyber for controlled cybersecurity testing under its Trusted Access for Cyber program.

OpenAI is expanding access to its latest cybersecurity AI model, GPT-5.4-Cyber, through a formal vetting program, allowing qualified users to test and strengthen cyber defenses while restricting broader use.

In a blog post, the company said that cybersecurity teams can access GPT-5.4-Cyber via its Trusted Access for Cyber (TAC) program, which vets parties by evaluating their intended use, security controls, and alignment with defensive objectives.

The statement said that GPT-5.4-Cyber can accelerate vulnerability discovery, but noted that similar capabilities could also be used to identify and exploit software weaknesses. The company said the TAC program is designed to reduce misuse by limiting access to vetted users.

OpenAI will monitor usage and apply restrictions to prevent the system from being used for offensive or unauthorized activities.

OpenAI’s announcement follows Anthropic’s recent launch of Project Glasswing, a partnership initiative with 40 companies from the AI and cybersecurity industries to sandbox Anthropic’s Mythos agent. Anthropic said that Mythos was so effective at finding cyber vulnerabilities that access had to be limited and the product thoroughly vetted before general access could be considered.

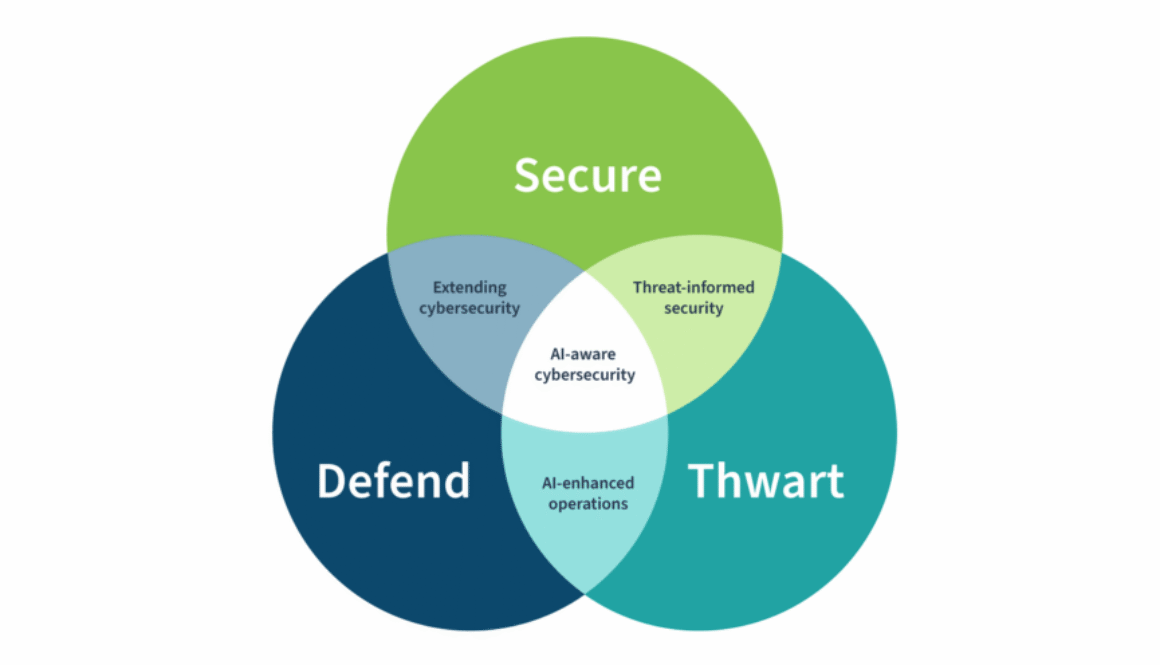

OpenAI is positioning the initiative as striking a balance between providing cybersecurity tools to defenders and keeping those tools away from potential bad actors.