The NSA is actively testing Anthropic’s Mythos while the White House engages with Anthropic leadership about the latest AI tool and future collaborations.

According to an Axios report, the National Security Agency (NSA) is actively evaluating Anthropic’s “Mythos” AI system, the company’s latest model, for its ability to identify software vulnerabilities and strengthen defensive capabilities. Anthropic recently limited the release of Mythos to a handful of large-scale AI and cybersecurity partners, including the NSA, for testing. The company claims the model is so good at identifying cyber risks that it could do serious harm in the wrong hands.

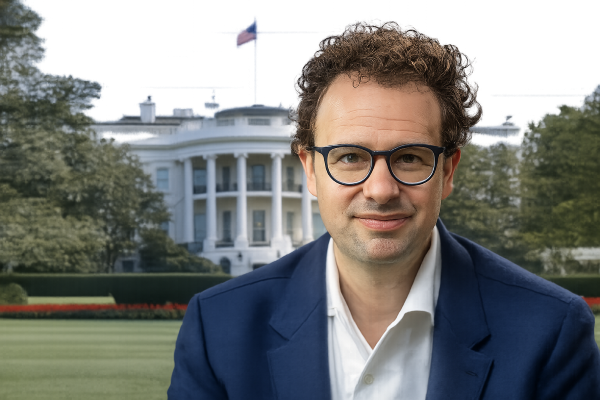

Concurrently, the White House held direct talks with Anthropic’s CEO, Dario Amodei, about Mythos and how Anthropic and the “U.S. government can work together on key shared priorities such as cybersecurity, America’s lead in the AI race, and AI safety” [source: AP].

The talks came on the heels of the Pentagon’s recent designation of the company as a supply chain risk, a label typically reserved for potentially hostile foreign actors. Anthropic recently challenged the designation, winning a temporary injunction in a San Francisco federal court.

The designation stemmed from a dispute between the Department of War and Anthropic, in which the former sought to employ Anthropic’s Claude AI tool for “all lawful purposes,” while the AI giant sought assurances that Claude would not be used for the surveillance of American citizens or the development of autonomous weapons.